AI Gets Brainier: Groundbreaking Tech Makes Models Leaner, Faster & Cheaper

Training powerful AI models usually means huge bills, endless hours, and massive energy use. But new research from MIT could change that: its researchers have found a way to make AI models leaner and faster while they're still learning.

The Game-Changing Tech

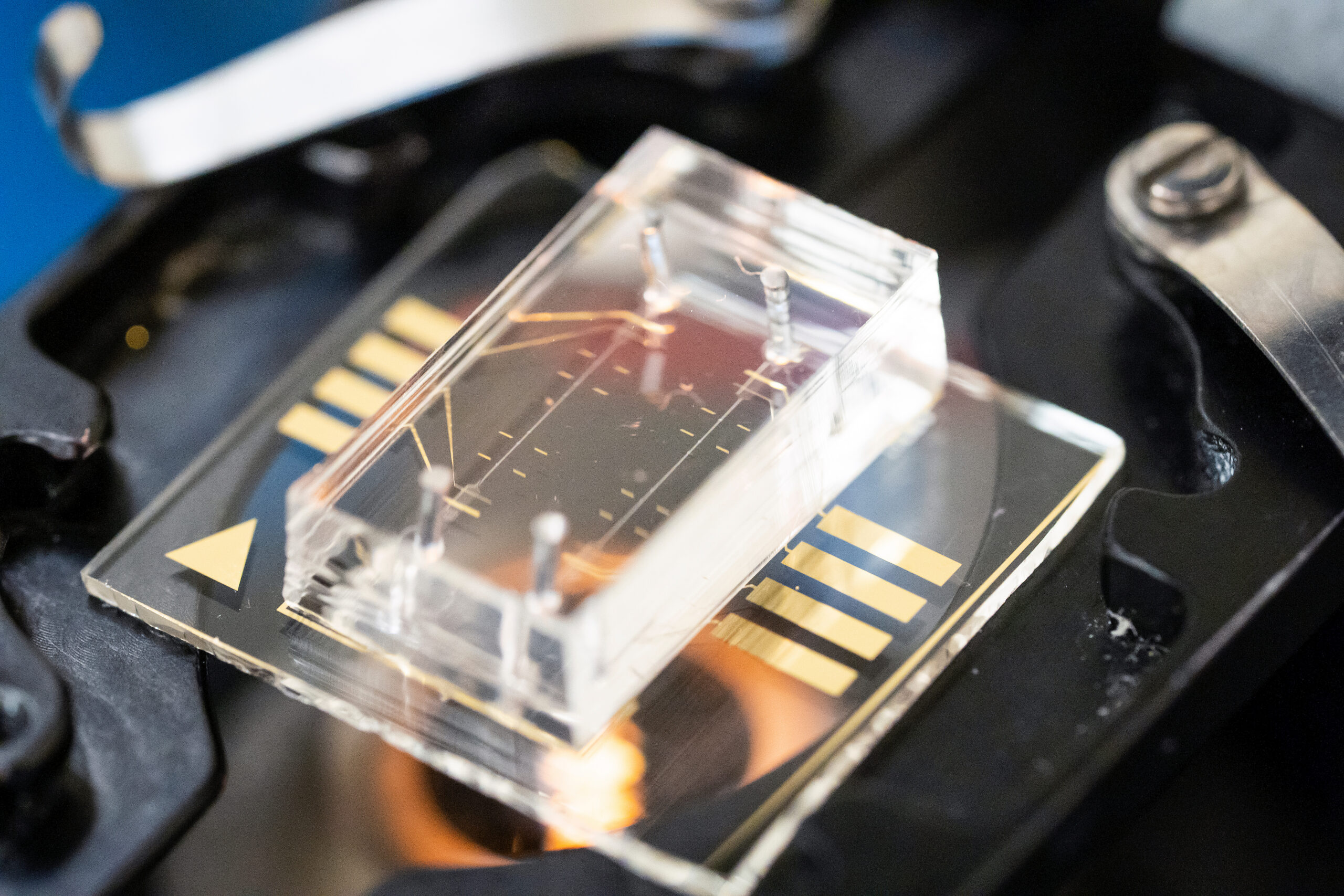

Forget the old ways of slimming down AI. Previously, you'd either train a giant model and chop it down afterwards, or start small and accept weaker results. Now, boffins at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) have cooked up CompreSSM.

This clever new method compresses models during training, saving a heap of time and cash. CompreSSM targets a specific family of AI architectures known as state-space models, which are used in everything from language processing to robotics.
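
For readers new to the term: a state-space model carries a hidden "state" vector along a sequence, updating it at every step. The sketch below assumes the simplest linear, single-input, single-output form (the function name `ssm_scan` is illustrative); real architectures such as Mamba make the parameters depend on the input and run many such channels in parallel. The number of entries in the state vector is the "dimension" that compression shrinks.

```python
import numpy as np

def ssm_scan(A, B, C, u):
    """Run a linear state-space model over a 1-D input sequence u.

    x_t = A @ x_{t-1} + B * u_t   (hidden state with n entries; n is the state dimension)
    y_t = C @ x_t                 (one output per step)
    """
    x = np.zeros(A.shape[0])
    ys = []
    for u_t in u:
        x = A @ x + B * u_t   # fold the new input into the running state
        ys.append(C @ x)      # read the output off the current state
    return np.array(ys)

# One-state example: after a single unit input, the state halves every step.
y = ssm_scan(np.diag([0.5]), np.array([1.0]), np.array([2.0]), [1.0, 0.0, 0.0])
print(y.tolist())  # [2.0, 1.0, 0.5]
```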

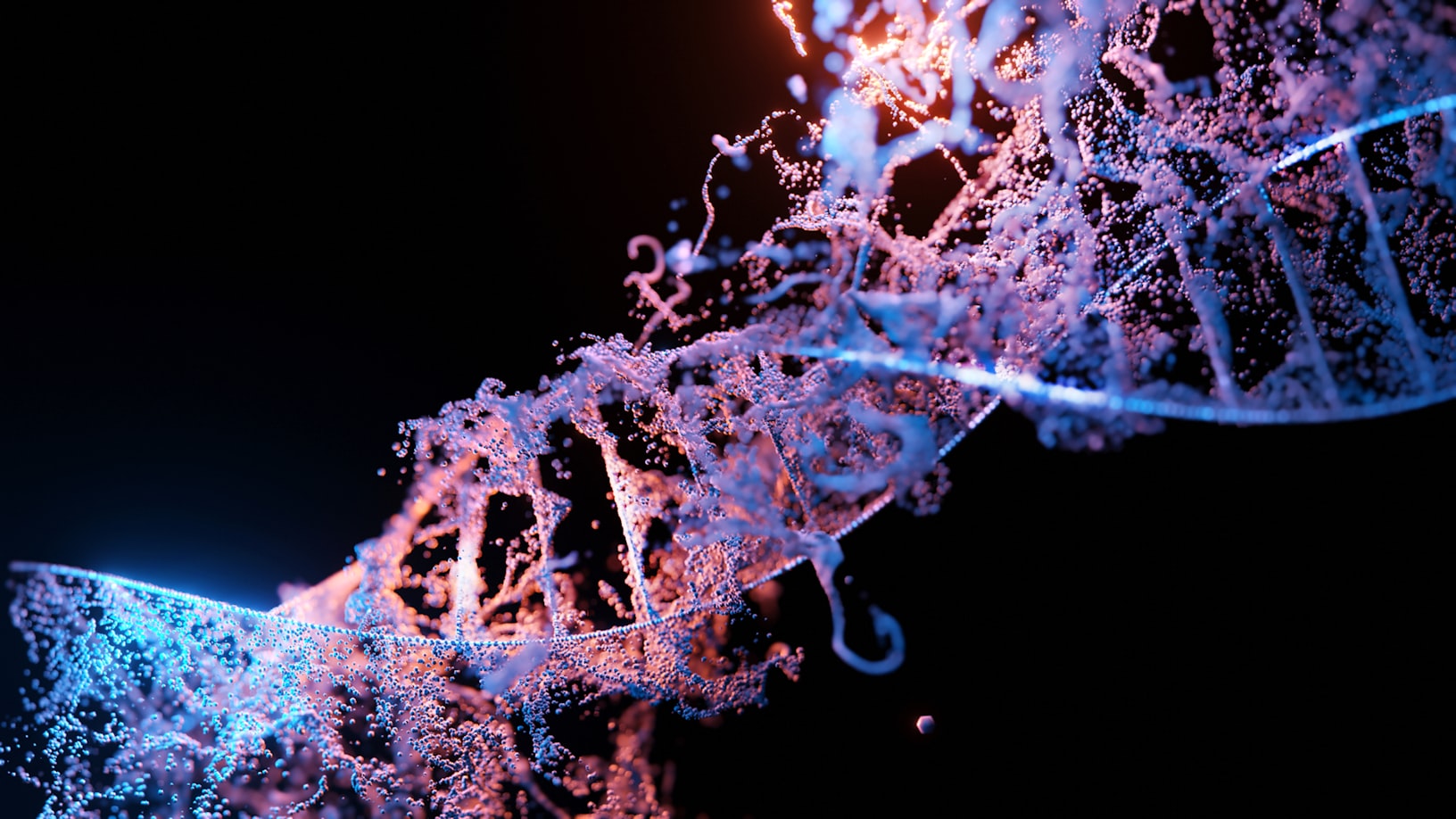

Unpacking the Brain-Box

CompreSSM helps AI models figure out which parts are useful. It identifies and surgically removes the unnecessary bits early in the learning process.

'It's essentially a technique to make models grow smaller and faster as they are training,' explains Makram Chahine, a PhD student and lead author of the paper. 'During learning, they're also getting rid of parts that are not useful to their development.'

The genius part? This 'weight loss' happens surprisingly early. Within just 10 percent of the training, the system can reliably rank which bits of the model are crucial and which aren't.
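
The article doesn't spell out how CompreSSM scores each part of the model. One established control-theory way to rank the internal states of a linear state-space model is balanced truncation, which measures each state's importance with Hankel singular values: a large value marks a state that inputs excite strongly and that shows up clearly in the output. The sketch below assumes that approach on a tiny, fixed toy system rather than a model mid-training; the helper names (`dlyap`, `balanced_truncate`, `choose_order`, `impulse`) and the 99 percent cut-off are hypothetical choices for illustration, not taken from the paper.

```python
import numpy as np

def dlyap(A, Q):
    """Solve X = A X A^T + Q (fine for small n: one dense Kronecker-product solve)."""
    n = A.shape[0]
    x = np.linalg.solve(np.eye(n * n) - np.kron(A, A), Q.reshape(-1))
    return x.reshape(n, n)

def balanced_truncate(A, B, C, k):
    """Square-root balanced truncation of a stable single-input, single-output SSM.

    Returns the reduced (A, B, C) with k states plus all Hankel singular values.
    """
    P = dlyap(A, np.outer(B, B))    # controllability Gramian: how strongly inputs excite each direction
    Q = dlyap(A.T, np.outer(C, C))  # observability Gramian: how visible each direction is in the output
    Lc = np.linalg.cholesky(P)      # P = Lc @ Lc.T
    Lo = np.linalg.cholesky(Q)      # Q = Lo @ Lo.T
    U, s, Vt = np.linalg.svd(Lo.T @ Lc)  # s: Hankel singular values, largest first
    scale = np.diag(s[:k] ** -0.5)
    T = Lc @ Vt[:k].T @ scale            # n x k: maps the kept balanced states back to original states
    T_inv = scale @ U[:, :k].T @ Lo.T    # k x n: projects original states onto the kept ones
    return T_inv @ A @ T, T_inv @ B, C @ T, s

def choose_order(s, keep=0.99):
    """Smallest k whose leading Hankel singular values carry `keep` of their total (a heuristic)."""
    share = np.cumsum(s) / np.sum(s)
    return min(int(np.searchsorted(share, keep)) + 1, len(s))

def impulse(A, B, C, steps):
    """Outputs y_t = C A^t B for t = 0..steps-1 (response to a single unit input)."""
    x, ys = B.copy(), []
    for _ in range(steps):
        ys.append(C @ x)
        x = A @ x
    return np.array(ys)

# Hypothetical 4-state toy model in which some states barely affect the output.
A = np.diag([0.9, 0.6, 0.3, 0.1])
B = np.ones(4)
C = np.array([1.0, 0.5, 0.1, 0.05])

_, _, _, s = balanced_truncate(A, B, C, 4)   # full-order pass, just to read off the ranking
k = choose_order(s)                          # how many top-ranked states to keep
Ar, Br, Cr, _ = balanced_truncate(A, B, C, k)
err = np.max(np.abs(impulse(A, B, C, 50) - impulse(Ar, Br, Cr, 50)))
print(k, s.round(4), err)
```

Ranking by Hankel singular values rather than by raw parameter size is the design point here: a state can have large weights yet contribute little to the input-output behaviour, and this score captures that.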

Speed & Savings

The results are seriously impressive. On image classification tests, the compressed models trained up to 1.5 times faster. Crucially, they kept almost the same accuracy as their full-sized counterparts.

For instance, a model shrunk to just a quarter of its original size still hit 85.7 percent accuracy on the CIFAR-10 benchmark, while a model trained at that smaller size from scratch managed only 81.8 percent.

'What's exciting about this work is that it turns compression from an afterthought into part of the learning process itself,' said Daniela Rus, MIT professor and director of CSAIL. 'Instead of training a large model and then figuring out how to make it smaller, CompreSSM lets the model discover its own efficient structure as it learns. That's a fundamentally different way to think about building AI systems.'

On Mamba, a widely used AI architecture, the method delivered training speedups of around 4x. It squashed a 128-dimensional model down to about 12 dimensions, all while keeping performance competitive.

'You get the performance of the larger model, because you capture most of the complex dynamics during the warm-up phase, then only keep the most useful states,' Chahine explained. 'The model is still able to perform at a higher level than training a small model from the start.'

What Makes it Different?

CompreSSM is smarter than traditional pruning, where you train a giant model and then cut it down, so you still pay the full training cost. It also beats 'knowledge distillation', which requires training a large 'teacher' model and then a smaller 'student' model to mimic it, roughly doubling the training effort.

Antonio Orvieto, an expert not involved in the research, called it an 'intriguing, theoretically grounded perspective on compression'. He believes it 'opens new avenues for future research' and could become a 'standard approach'.

There’s a handy safety net too. If compression causes a performance wobble, developers can easily revert. 'It gives people control over how much they're willing to pay in terms of performance,' Chahine noted.
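
The article doesn't describe how the revert works in practice. A minimal sketch, assuming a single compression attempt once 10 percent of training is done and a developer-chosen tolerance `max_drop` on validation score; the function names, the tolerance, and the toy scores are all hypothetical.

```python
from typing import Callable, Tuple, TypeVar

M = TypeVar("M")

def compress_with_fallback(model: M, compress: Callable[[M], M],
                           evaluate: Callable[[M], float], max_drop: float) -> Tuple[M, bool]:
    """Accept the compressed model only if its validation score drops by at most `max_drop`."""
    baseline = evaluate(model)
    candidate = compress(model)
    if baseline - evaluate(candidate) <= max_drop:
        return candidate, True
    return model, False  # revert: carry on training the uncompressed model

def train(model: M, total_steps: int, train_step: Callable[[M], M],
          compress: Callable[[M], M], evaluate: Callable[[M], float],
          warmup_fraction: float = 0.1, max_drop: float = 0.01) -> Tuple[M, bool]:
    """Train normally, attempting one compression after the warm-up fraction of steps."""
    compress_at = int(total_steps * warmup_fraction)
    accepted = False
    for step in range(total_steps):
        if step == compress_at:
            model, accepted = compress_with_fallback(model, compress, evaluate, max_drop)
        model = train_step(model)
    return model, accepted

# Toy stand-ins: a "model" is just its state size, and the scores are made up.
scores = {128: 0.900, 32: 0.895}
final, ok = train(128, 100, lambda m: m, lambda m: m // 4, scores.__getitem__)
print(final, ok)        # 32 True   (drop of 0.005 is within the 0.01 budget)
strict, ok2 = train(128, 100, lambda m: m, lambda m: m // 4, scores.__getitem__, max_drop=0.001)
print(strict, ok2)      # 128 False (same drop exceeds the tighter budget, so it reverts)
```

The `max_drop` knob is one way to express the "control over how much they're willing to pay in terms of performance" that Chahine describes.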

OFFICIAL SOURCE VERIFICATION: This report is based on an official MIT News release, [New technique makes AI models leaner and faster while they’re still learning](https://news.mit.edu/2026/new-technique-makes-ai-models-leaner-faster-while-still-learning-0409).